Why embedding models matter: a hard-earned lesson from building local AI RAG systems

Embeddings: the underrated foundation of AI RAG systems

🚀What old hardware taught me about embeddings

Recently, I decided to breathe new life into my 10-year-old Surface Pro 3 by installing Linux on it. To my surprise, this old device turned out to be perfectly capable of running small, locally hosted large language models (LLMs), such as IBM’s Granite series, as a fully local AI system.

Yes, really! With 8 GB of RAM, an Intel i7 (4th Gen), and no GPU, you can run compact LLMs at quite an acceptable speed.

However, during this experiment I learned a very important lesson about Retrieval-Augmented Generation (RAG), and it completely changed how I think about embeddings and context preparation.

💡The key realization

When building RAG solutions locally with orchestration frameworks such as LangChain, LlamaIndex, or Haystack, I discovered that while general-purpose LLMs can generate embeddings, they’re far from optimal for that task.

That was my “aha” moment. Specialized embedding models like nomic-embed-text or all-MiniLM exist for a reason - and using them makes all the difference.

🥼The experiment

I use Ollama to run LLMs locally. For testing, I downloaded the relatively small IBM Granite4:micro-h (1.9 GB) model.

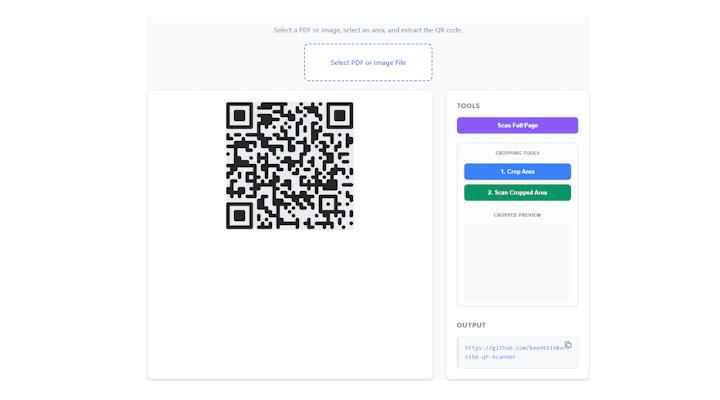

I started with a simple prototype in LangChain:

1. Create an embedding object.
2. Specify Granite 4 as the embedding model.
3. Generate a vector for the text “Hello, world”.
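The three steps above can also be sketched without any framework by calling Ollama's REST embedding endpoint directly. This is a minimal sketch using only the Python standard library. It assumes a local Ollama server on its default port 11434 and the POST /api/embed endpoint of recent Ollama versions. The helper names build_embed_request and embed are hypothetical, and this is not the LangChain code from my prototype.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/embed"


def build_embed_request(model: str, texts: list[str]) -> urllib.request.Request:
    """Build a POST request asking Ollama to embed one or more texts."""
    payload = json.dumps({"model": model, "input": texts}).encode("utf-8")
    return urllib.request.Request(
        OLLAMA_URL,
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def embed(model: str, texts: list[str], timeout: float = 120.0) -> list[list[float]]:
    """Send the request and return one embedding vector per input text."""
    request = build_embed_request(model, texts)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    # /api/embed answers with {"embeddings": [[...], ...], ...}
    return body["embeddings"]
```

With a server running and the model pulled, embed("nomic-embed-text", ["Hello, world"]) returns a list containing one vector. Passing "granite4:micro-h" instead of "nomic-embed-text" reproduces the original setup. Swapping that single model name is exactly the change that made the difference later on.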

Everything worked smoothly. Encouraged, I added an in-memory vector store and implemented a similarity search.
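An in-memory store with similarity search can be as small as a list of (text, vector) pairs ranked by cosine similarity. The sketch below is a hypothetical, framework-free illustration; the class name InMemoryVectorStore is made up. The tiny three-dimensional vectors are placeholders for real embeddings, which would come from an embedding model.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector has no direction to compare
    return dot / (norm_a * norm_b)


class InMemoryVectorStore:
    """Hypothetical minimal store: keeps texts and their vectors in parallel lists."""

    def __init__(self) -> None:
        self.texts: list[str] = []
        self.vectors: list[list[float]] = []

    def add(self, text: str, vector: list[float]) -> None:
        self.texts.append(text)
        self.vectors.append(vector)

    def search(self, query_vector: list[float], k: int = 2) -> list[tuple[str, float]]:
        """Return the k stored texts most similar to the query, best match first."""
        scored = [
            (text, cosine_similarity(query_vector, vec))
            for text, vec in zip(self.texts, self.vectors)
        ]
        scored.sort(key=lambda pair: pair[1], reverse=True)
        return scored[:k]


# Toy vectors for illustration only; real ones come from an embedding model.
store = InMemoryVectorStore()
store.add("Ollama runs LLMs locally.", [0.9, 0.1, 0.0])
store.add("Granite models are small LLMs.", [0.8, 0.3, 0.1])
store.add("The Surface Pro 3 has 8 GB of RAM.", [0.0, 0.2, 0.9])

results = store.search([0.85, 0.2, 0.05], k=2)
```

A brute-force scan like this is fine for a few thousand chunks. Dedicated vector stores add indexing to keep search fast as the collection grows.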

Then I decided to use something more substantial - a full Wikipedia article as context. That’s when things got interesting.

After launching the server and sending a request, I waited. And waited. And waited some more. Eventually, LangChain timed out.

At first, I assumed it was a framework issue. So I rebuilt the same solution using LlamaIndex. Same result. That’s when I realized the issue wasn’t with the frameworks at all.

The problem was the embedding model. I was using a general LLM instead of a specialized embedding model.

Once I switched to a proper embedding model, everything changed - it worked beautifully!

✅ 70 KB of text (roughly 17K tokens) was embedded in about 3 minutes.

✅ Splitting the text into smaller chunks with a bit of overlap further improved performance and efficiency.
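Splitting with overlap can be sketched as a sliding window over characters. This is a simplified, hypothetical splitter that uses fixed-size character windows. Frameworks such as LangChain and LlamaIndex ship more sophisticated splitters that respect sentence or paragraph boundaries. The chunk_size and overlap defaults below are illustrative, not tuned values from my experiment.

```python
def split_with_overlap(text: str, chunk_size: int = 1000, overlap: int = 200) -> list[str]:
    """Split text into character chunks; consecutive chunks share `overlap` characters."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")
    step = chunk_size - overlap  # how far the window moves each time
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        # Stop once this window has reached the end of the text.
        if start + chunk_size >= len(text):
            break
    return chunks
```

For example, a 70,000-character text split with the defaults yields 88 chunks of at most 1,000 characters. Each chunk is embedded separately, so it stays well within the model's input limit. The overlap keeps a sentence that straddles a boundary intact in at least one chunk.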

🧭The takeaway

The lesson here is simple but powerful:

👉 Embedding models are critical for preparing your data before retrieval and generation.

They’re not just an optional optimization - they’re the foundation of a well-performing RAG system.

Embedding models are purpose-built for exactly this: creating embedding vectors. Unlike general-purpose LLMs (such as LLaMA), which are designed primarily to generate text, embedding models are trained to represent the meaning of text as a dense vector with a fixed number of dimensions (384 for the all-MiniLM models, 768 for nomic-embed-text). No single dimension has an obvious meaning on its own. Instead, the model learns to place texts with similar meaning close together in this space, so the distance between two vectors reflects how closely the underlying pieces of text are related.
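Closeness in this space is commonly measured with cosine similarity. For two n-dimensional embedding vectors u and v:

$$
\cos(\mathbf{u}, \mathbf{v}) = \frac{\mathbf{u} \cdot \mathbf{v}}{\lVert \mathbf{u} \rVert \, \lVert \mathbf{v} \rVert} = \frac{\sum_{i=1}^{n} u_i v_i}{\sqrt{\sum_{i=1}^{n} u_i^2}\,\sqrt{\sum_{i=1}^{n} v_i^2}}
$$

A value near 1 means the two vectors point in nearly the same direction (similar meaning), while a value near 0 means the texts are largely unrelated. If the vectors are normalized to unit length, the denominator equals 1 and cosine similarity reduces to the plain dot product.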

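Tokens play a crucial role here. The model breaks text into discrete tokens, turns them into vectors, and combines those into a single vector for the whole passage, for example by averaging them (known as mean pooling). Embedding models are typically much smaller than general-purpose LLMs and are trained specifically so that related texts produce similar vectors. As a result, they are both faster and more reliable when your RAG system depends on accurate similarity search and retrieval. Each embedding model also accepts only a limited number of tokens per input, which is one more reason to split long documents into chunks. In short, embeddings turn text into numbers that can be compared quickly and meaningfully, something general-purpose LLMs aren’t optimized for.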
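To make the token-to-vector idea concrete, here is a deliberately toy sketch of one common way to turn per-token vectors into a single text embedding: mean pooling followed by normalization to unit length. Everything here is hypothetical. The whitespace tokenizer and the hand-written TOKEN_VECTORS table stand in for a real model's subword tokenizer and its learned token vectors. A real model also passes the tokens through transformer layers before pooling, so each token's vector depends on its context.

```python
import math

# Hypothetical 3-dimensional token vectors; a real model learns hundreds of dimensions.
TOKEN_VECTORS = {
    "hello": [0.9, 0.1, 0.0],
    "world": [0.1, 0.9, 0.0],
    "linux": [0.0, 0.1, 0.9],
}
UNKNOWN = [0.0, 0.0, 0.0]  # fallback for tokens missing from the toy table


def tokenize(text: str) -> list[str]:
    """Toy tokenizer: lowercase, replace punctuation with spaces, split on whitespace."""
    cleaned = "".join(ch.lower() if ch.isalnum() or ch.isspace() else " " for ch in text)
    return cleaned.split()


def embed_text(text: str) -> list[float]:
    """Mean-pool the token vectors, then scale the result to unit length."""
    vectors = [TOKEN_VECTORS.get(tok, UNKNOWN) for tok in tokenize(text)]
    if not vectors:
        raise ValueError("text contains no tokens")
    dims = len(vectors[0])
    mean = [sum(vec[i] for vec in vectors) / len(vectors) for i in range(dims)]
    norm = math.sqrt(sum(x * x for x in mean))
    return [x / norm for x in mean] if norm else mean
```

Because the output is normalized, comparing two such vectors with a dot product gives their cosine similarity directly.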

🔜What’s next

Along the way, I created an introductory presentation for my (non-technical) colleagues that explains how large language models (LLMs) work, with a hands-on demo of a Retrieval-Augmented Generation (RAG) system built with an orchestration framework. The overview also covers how these frameworks operate and why they’re useful for building and managing complex AI workflows: they tie together key components such as embedding generation, similarity search, and LLM querying into a single pipeline.
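The final step of that pipeline, LLM querying, usually means placing the retrieved chunks into the prompt as context. The sketch below shows one hypothetical way to assemble such a prompt. The function name build_rag_prompt and the template wording are illustrative assumptions, not a template used by any particular framework.

```python
def build_rag_prompt(question: str, retrieved_chunks: list[str]) -> str:
    """Combine retrieved context and the user's question into one prompt (hypothetical template)."""
    context = "\n\n".join(
        f"[{i}] {chunk.strip()}" for i, chunk in enumerate(retrieved_chunks, start=1)
    )
    return (
        "Answer the question using only the context below. "
        "If the context does not contain the answer, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )
```

In a setup like mine, the resulting string would go to the chat model (Granite), while the embedding model is only used to find the chunks. Keeping those two roles separate is the point of this whole post.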

In my next post, I’ll walk through the technical side, including the code and setup, for anyone who wants to experiment with local LLMs on modest hardware. Stay tuned.